The Smithsonian Institution, Columbia University and the University of Maryland have pooled their expertise to create the world’s first plant identification mobile app using visual search—Leafsnap. This electronic field guide allows users to identify tree species simply by taking a photograph of the tree’s leaves. In addition to the species name, Leafsnap provides high-resolution photographs and information about the tree’s flowers, fruit, seeds and bark—giving the user a comprehensive understanding of the species.

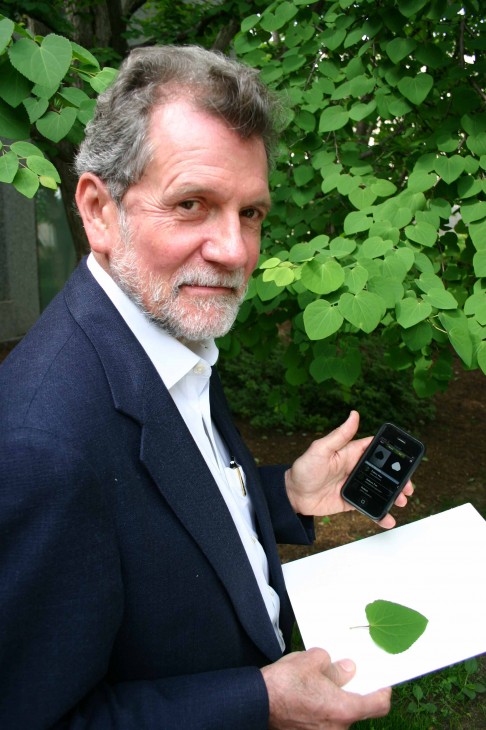

Smithsonian botanist John Kress uses the new mobile app to correctly identify a katsura tree (Cercidiphyllum japonicum) growing in the Smithsonian’s Enid A. Haupt Garden on the National Mall in Washington, D.C.

“We wanted to use mathematical techniques we were developing for face recognition and apply them to species identification,” said Peter Belhumeur, professor of computer science at Columbia and leader of the Columbia team working on Leafsnap. “Traditional field guides can be frustrating—you often do not find what you are looking for. We thought we could redesign them using today’s smartphones and visual recognition technology.”

David Jacobs of the University of Maryland and Belhumeur approached John Kress, research botanist at the Smithsonian’s National Museum of Natural History, to collaborate on remaking the traditional field guide for the 21st century.

“Leafsnap was originally designed as a specialized aid for scientists and plant explorers to discover new species in poorly known habitats,” said Kress, who leads the Smithsonian team working on Leafsnap. Kress was digitizing the Smithsonian’s botanical specimens when Jacobs and Belhumeur first contacted him, so the pairing of a botanist with computer scientists came at a perfect time. “Now Smithsonian research is available as an app for the public to get to know the plant diversity in their own backyards, in parks and in natural areas. This tool is especially important for the environment, because learning about nature is the first step in conserving it.”

In addition to identifying and providing information about plants, Leafsnap can also map a specific plant’s location and save it for future reference. (Photos by John Barrat)

Users of Leafsnap will not only be learning about the trees in their communities and on their hikes—they will also be contributing to science. As people use Leafsnap, the free mobile app automatically shares their images, species identifications and the tree’s location with a community of scientists. These scientists will use the information to map and monitor population growth and decline of trees nationwide. Currently, Leafsnap’s database includes the trees of the Northeast, but it will soon expand to cover the trees of the entire continental United States.

The visual recognition algorithms developed by Columbia University and the University of Maryland are key to Leafsnap. Each leaf photograph is matched against a leaf-image library using numerous shape measurements computed at points along the leaf’s outline. The best matches are then ranked and returned to the user for final verification.
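The article does not say exactly which shape measurements Leafsnap computes. To illustrate the general idea (measure the outline at many points, compare against a library, rank the best matches), here is a minimal Python sketch. It is not Leafsnap's code. The turning-angle descriptor, the scales and bin count, the histogram-intersection score, and every function name are assumptions made for illustration, and it presumes the leaf outline has already been extracted from the photo as an ordered list of (x, y) points.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


def turning_angle(a: Point, b: Point, c: Point) -> float:
    """Unsigned angle in [0, pi] between the segments a->b and b->c."""
    v1 = (b[0] - a[0], b[1] - a[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    return abs(math.atan2(cross, dot))


def shape_descriptor(contour: Sequence[Point], scales: Sequence[int] = (1, 2, 4),
                     bins: int = 8) -> List[float]:
    """Hypothetical descriptor: histograms of turning angles at every outline point.

    `contour` is assumed to be a closed outline of ordered (x, y) points.
    Larger scales compare points farther apart along the outline, so they
    respond to coarser bends; small scales respond to fine detail.
    """
    n = len(contour)
    features: List[float] = []
    for r in scales:
        hist = [0.0] * bins
        for i in range(n):
            angle = turning_angle(contour[(i - r) % n], contour[i], contour[(i + r) % n])
            hist[min(int(angle / math.pi * bins), bins - 1)] += 1.0
        features.extend(hist)
    total = n * len(scales)
    return [h / total for h in features]  # normalized so the vector sums to 1


def histogram_intersection(a: Sequence[float], b: Sequence[float]) -> float:
    """Similarity in [0, 1] for two normalized histograms (1 means identical)."""
    return sum(min(x, y) for x, y in zip(a, b))


def rank_species(query: Sequence[Point], library: Dict[str, List[Sequence[Point]]],
                 k: int = 3) -> List[Tuple[str, float]]:
    """Score each species by its best-matching library outline; return the top k."""
    q = shape_descriptor(query)
    scores = [(species, max(histogram_intersection(q, shape_descriptor(c)) for c in outlines))
              for species, outlines in library.items()]
    scores.sort(key=lambda item: item[1], reverse=True)
    return scores[:k]


# Hypothetical toy outlines standing in for real leaf contours.
def circle(radius: float, n: int = 60) -> List[Point]:
    return [(radius * math.cos(2 * math.pi * t / n), radius * math.sin(2 * math.pi * t / n))
            for t in range(n)]


def star(tips: int = 8, outer: float = 1.0, inner: float = 0.4) -> List[Point]:
    return [((outer if k % 2 == 0 else inner) * math.cos(math.pi * k / tips),
             (outer if k % 2 == 0 else inner) * math.sin(math.pi * k / tips))
            for k in range(2 * tips)]


library = {"round-leaved": [circle(1.0)], "star-shaped": [star()]}
for species, score in rank_species(circle(1.1), library):
    print(f"{species}: {score:.2f}")
```

Turning angles do not change when the outline is uniformly scaled or rotated, so in this sketch a leaf photographed from a different distance or angle in the image plane still matches its library entry. Keeping several outlines per species and scoring each species by its best match is one simple, assumed way to tolerate variation in leaf shape within a species. The ranked list is what would be handed back to the user for the final decision.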

“Within a single species leaves can have quite diverse shapes, while leaves from different species are sometimes quite similar,” said Jacobs, a professor of computer science at the University of Maryland. “So one of the main technical challenges in using leaves to identify plant species has been to find effective representations of their shape, which capture their most important characteristics.”

The algorithms and software were developed by Columbia and the University of Maryland, and the Smithsonian supervised the identification and collection of leaves needed to create the image library used for the visual recognition in Leafsnap. In addition, the not-for-profit organization Finding Species was hired and supervised by the Smithsonian to acquire the detailed species images seen in the Leafsnap app and on the Leafsnap.com website.

The app is available for the iPhone, with iPad and Android versions to be released later this summer.